Explorer of Monte-Carlo based Algorithms

Explorer of Monte-Carlo based Algorithms can be used to explore, analyze or debug path tracing algorithms based on Monte Carlo Integration.

Update 2021

EMCA has been accepted and published officially at VMV 2021: https://diglib.eg.org/handle/10.2312/vmv20211377

@inproceedings {10.2312:vmv.20211377,
  booktitle = {Vision, Modeling, and Visualization},
  editor = {Andres, Bjoern and Campen, Marcel and Sedlmair, Michael},
  title = {{EMCA: Explorer of Monte Carlo based Algorithms}},
  author = {Ruppert, Lukas and Kreisl, Christoph and Blank, Nils and Herholz, Sebastian and Lensch, Hendrik P. A.},
  year = {2021},
  publisher = {The Eurographics Association},
  ISBN = {978-3-03868-161-8},
  DOI = {10.2312/vmv.20211377}
}

EMCA — Explorer of Monte-Carlo based Algorithms

EMCA is a framework for visualizing Monte Carlo-based algorithms. More precisely, it is designed to visualize and analyze unidirectional path tracing algorithms. The framework consists of two parts: a server, which serves as an interface to the respective rendering system, and a client, which handles the visualization. The client is written in Python and can be easily extended. EMCA works on a per-pixel basis: instead of pre-computing and saving the data for the whole image during rendering, everything is computed at run-time. The data is generated and collected only for the pixel selected by the user.

This framework was developed as a master's thesis (03/2019) at the University of Tübingen (Germany). Special thanks go to Prof. Hendrik Lensch, Sebastian Herholz (supervisor), Tobias Rittig and Lukas Ruppert, who made this work possible.

Since the release of the master's thesis, several changes have been made so that the framework can now be published as an alpha version in a fairly stable state. Its primary goal is to support other developers, and especially universities, researching Monte Carlo-based rendering algorithms. Furthermore, it should encourage further ideas and improvements so that EMCA continues to be developed.

Currently this framework only runs on Linux systems. It was tested and developed on Ubuntu 16.04, 18.04 and 19.10.

- Source Code: https://github.com/ckreisl/emca

Client

Brushing and Linking

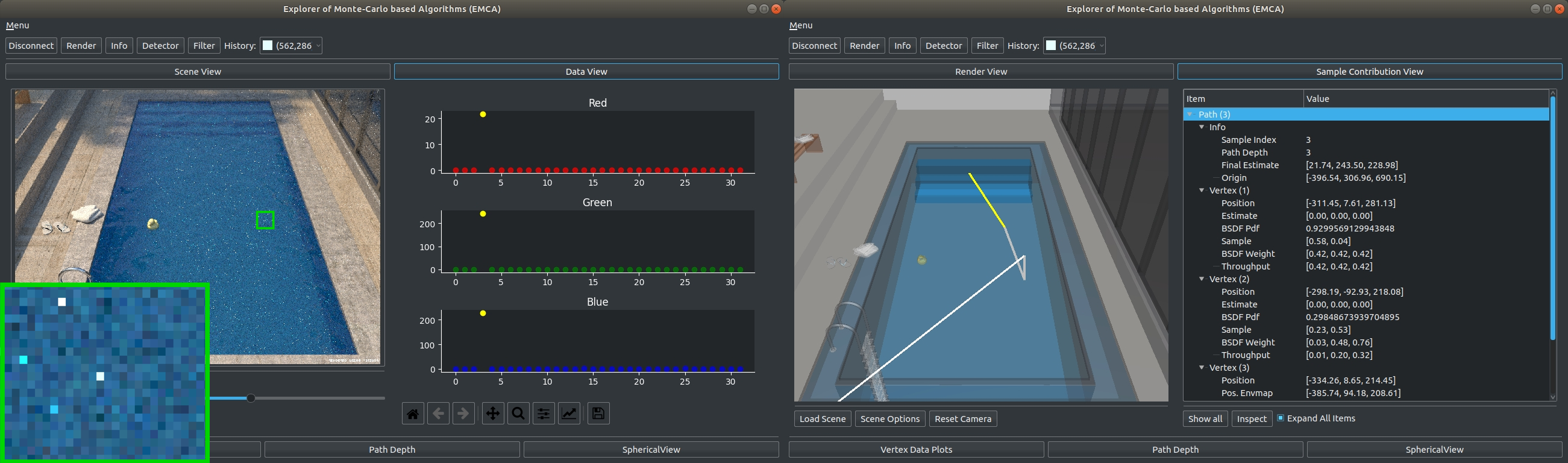

The concept of brushing and linking is to connect multiple views within a GUI that represent different parts of the same data. Selecting a path or intersection in any view automatically selects the same path or intersection in all other views, including the (custom) tools presented shortly. This approach provides insight into multiple aspects of the data without cluttering a single view with all the available data. The connection to the scene view is especially valuable, as it gives insight into intersection points that are otherwise difficult to interpret.
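The linking idea can be sketched with a small observer pattern. This is a minimal illustration only: `SelectionModel`, `RecordingView` and the `(path, intersection)` tuple are hypothetical names chosen for this sketch and are not taken from the EMCA code base.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical selection type: (path index, intersection index or None).
Selection = Tuple[int, Optional[int]]


class SelectionModel:
    """Shared selection state that every linked view subscribes to."""

    def __init__(self) -> None:
        self._listeners: List[Callable[[Selection], None]] = []
        self.current: Optional[Selection] = None

    def subscribe(self, listener: Callable[[Selection], None]) -> None:
        self._listeners.append(listener)

    def select(self, path: int, intersection: Optional[int] = None) -> None:
        # Store the selection once, then notify every linked view.
        self.current = (path, intersection)
        for listener in self._listeners:
            listener(self.current)


class RecordingView:
    """Stand-in for a real view; it only records what it was told to show."""

    def __init__(self, name: str, model: SelectionModel) -> None:
        self.name = name
        self.shown: Optional[Selection] = None
        model.subscribe(self.on_selection)

    def on_selection(self, selection: Selection) -> None:
        self.shown = selection


model = SelectionModel()
views = [RecordingView(name, model) for name in ("scene", "data", "plots")]

# A selection made anywhere goes through the model and reaches all views.
model.select(path=3, intersection=1)
```

Because each view only talks to the shared model, a new view or tool can be linked by subscribing to it without touching the existing views.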

Render View

Displaying the rendered image, the render view is the starting point of any visualization task. Here, the user can select individual pixels, e.g. ones containing artifacts such as fireflies or general high-variance regions, to inspect their contributing light transport paths and look for potential errors or sources of high variance. The image can be loaded from a file, added via drag and drop, or requested from the server to be rendered anew. On selection of a pixel, the server is queried for the corresponding path data, which can be quickly generated by the rendering system. Once this data is received, it becomes available for further inspection in the following views. A history of previously selected pixels makes it possible to quickly switch between several pixels of interest.

Sample Contribution View

The sample contribution view provides interactive scatter plots of each path’s estimate of incident radiance for the selected pixel per spectrum provided by the renderer. In the scatter plots, paths can be quickly classified into non-contributing paths, regularly contributing paths and outliers which might have caused a firefly artifact. Here, one or multiple paths can be selected for inspection. For efficient selection of a subset of paths, a rectangular selection tool is provided.

- The rectangle selection tool can be (de-)activated by pressing the ‘R’ key.

- Single paths can be added to the selection by holding down the ‘Shift’ key while selecting elements.

Scene View

The scene view allows the user to explore the selected traced paths within a semitransparent representation of the scene’s geometry. The camera is initialized to its location in the rendered image and can be moved around freely. To allow for quick selection of paths, a rectangular selection tool can be used to select individual intersections directly in the scene view. When selecting path intersections from other views, the camera is automatically moved to the selected intersection. To additionally highlight the selected intersection, its preceding path segment is highlighted in green while the intersection point is colored in orange. Regular path segments are shown in white unless they terminate in the environment map in which case they are colored in yellow. If the rendering algorithm uses next event estimation, shadow rays can be shown in blue for successful connections to the emitter and in red in case the sampled emitter is occluded.

- The rectangle selection tool can be (de-)activated by pressing the ‘R’ key.
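The color scheme of the scene view can be summarized as a small lookup function. This is only a sketch of the rules stated above: the `Segment` fields are hypothetical, and the order in which the rules are checked (shadow rays first, then the selection highlight, then the environment map case) is an assumption, since the text does not say which color wins when several cases apply.

```python
from dataclasses import dataclass
from enum import Enum


class Color(Enum):
    WHITE = "white"
    YELLOW = "yellow"
    GREEN = "green"
    BLUE = "blue"
    RED = "red"


@dataclass
class Segment:
    # All field names are hypothetical; they only mirror the cases in the text.
    selected: bool = False          # segment preceding the selected intersection
    hits_environment: bool = False  # segment terminates in the environment map
    shadow_ray: bool = False        # next event estimation connection
    occluded: bool = False          # only meaningful for shadow rays


def segment_color(seg: Segment) -> Color:
    """Map a path segment to its display color (rule priority is assumed)."""
    if seg.shadow_ray:
        return Color.RED if seg.occluded else Color.BLUE
    if seg.selected:
        return Color.GREEN
    if seg.hits_environment:
        return Color.YELLOW
    return Color.WHITE
```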

Data View

The render data view shows all the collected data for each selected path and its vertices. Paths and their data are presented to the user in a collapsible tree structure, which allows for clear inspection and comparison of various paths and individual vertices. In combination with the scene view, light transport paths can be quickly analyzed by interactively stepping through the individual intersection points, simply by selecting the vertices in the data view.

Custom Plugin Interface

New path tracing approaches might make use of arbitrary auxiliary data, such as spherical radiance caches, which might be too complex to be suitably displayed in the existing 2D and 3D intersection data plots or the textual render data view. To address the individual needs of novel path tracing algorithms, a custom tool interface allows for simple construction of additional views with access to all the available path and intersection data for the current pixel. Following the brushing and linking concept, the tool is notified of the currently selected path and intersection so that it can update its contents accordingly. Should the data collected during path tracing not suffice for the custom tool's needs, a matching custom server module can be created, from which the custom tool can request arbitrary additional data at any time.
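A custom tool of this kind might look roughly as follows. The sketch is not EMCA's actual plugin API: the base class `CustomTool`, the hook `on_selection`, the `Request` callback and the module name `"radiance_probe"` are all hypothetical and only illustrate the two ideas described above, namely being notified of the selection and requesting extra data from a matching server module.

```python
from abc import ABC, abstractmethod
from typing import Any, Callable, Dict, Optional

# Hypothetical request signature: (server module name, parameters) -> reply.
Request = Callable[[str, Dict[str, Any]], Any]


class CustomTool(ABC):
    """Hypothetical base class; the real plugin interface may differ."""

    def __init__(self, request: Request) -> None:
        self._request = request

    @abstractmethod
    def on_selection(self, path: int, intersection: Optional[int]) -> None:
        """Called whenever the linked selection changes."""


class RadianceProbeTool(CustomTool):
    """Requests additional data for each newly selected intersection."""

    def __init__(self, request: Request) -> None:
        super().__init__(request)
        self.latest: Any = None

    def on_selection(self, path: int, intersection: Optional[int]) -> None:
        if intersection is None:
            return  # nothing to probe when only a path is selected
        self.latest = self._request(
            "radiance_probe", {"path": path, "intersection": intersection}
        )


def fake_server(module: str, params: Dict[str, Any]) -> Dict[str, Any]:
    # Stand-in for the renderer-side module; it just echoes the request.
    return {"module": module, **params, "radiance": [0.0, 0.0, 0.0]}


tool = RadianceProbeTool(fake_server)
tool.on_selection(path=4, intersection=2)
```

Passing the request function into the tool keeps the example independent of any networking code; in a real setup it would forward the request to the server.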

Intersection Data Plots Plugin (Core Plugin)

The intersection data plots provide an aggregated view of all user-supplied data for a single traced path. The view allows for exploring fundamental quantities, such as the Russian roulette probability or the PDF, and how they change during ray traversal. It supports multiple data types, with 2D and 3D plots for one- or two-dimensional data at various path depths as well as spectral plots for radiance data, which covers the most commonly gathered data. All plots are created automatically on the fly after the render data has been received from the server.

Path Depth Plugin (Core Plugin)

The path depth view allows for analyzing traced paths according to the depth they reach. Detecting paths that end too early can be essential for identifying convergence problems in a rendered image. Terminating paths based only on their throughput may undersample important contributions from hard-to-reach light sources. Therefore, in combination with the per-path estimate view, one can analyze various Russian roulette criteria, such as adjoint-driven Russian roulette (ADRR), and the relation between path contribution and reached depth.
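The kind of analysis this view supports can be sketched with plain Python. The helper names and the sample numbers below are made up for illustration; the sketch assumes each path is described by its reached depth and a scalar contribution.

```python
from collections import Counter, defaultdict
from typing import Dict, List


def depth_histogram(depths: List[int]) -> Dict[int, int]:
    """Count how many paths terminated at each depth."""
    return dict(sorted(Counter(depths).items()))


def mean_contribution_by_depth(
    depths: List[int], contributions: List[float]
) -> Dict[int, float]:
    """Average the scalar path contribution separately for each depth."""
    grouped: Dict[int, List[float]] = defaultdict(list)
    for depth, value in zip(depths, contributions):
        grouped[depth].append(value)
    return {d: sum(v) / len(v) for d, v in sorted(grouped.items())}


# Made-up sample: six paths with their depth and contribution.
depths = [1, 2, 2, 3, 5, 2]
contributions = [0.0, 0.5, 0.25, 1.0, 8.0, 0.75]

histogram = depth_histogram(depths)
means = mean_contribution_by_depth(depths, contributions)
```

In this made-up sample, most paths stop at depth 2 while the single depth-5 path carries by far the largest contribution, which is the kind of pattern that would prompt a closer look at the termination criterion.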

Spherical View Plugin (Custom Plugin – mitsuba)

The spherical view tool is an example of a custom tool requiring more data than initially collected during path tracing. It displays the incident radiance from all directions at the active intersection position. Computing an estimate of the incident radiance can take a considerable amount of time, depending on the selected resolution, the sample count and the integrator used. Therefore, it does not make sense to pre-compute it during the path tracing step. Instead, the incident radiance is requested and rendered on the fly as each intersection is selected while the tool is active.

Features

High Variance Sample Detector

In Monte Carlo integration, paths should be sampled proportionally to their unknown eventual contribution. To reduce the variance in the rendered image, importance sampling schemes such as throughput-oriented BSDF sampling are applied. However, low-probability paths encountering strong emitters will result in extreme contribution estimates that manifest as firefly artifacts. Investigating these paths provides crucial insights into remaining sources of high variance that developers of efficient path tracers aim to eliminate. Clamping and denoising can be used to remove remaining fireflies. However, such methods are unsatisfactory as they require an additional post-processing step and bias the outcome.

Often, only a single path out of hundreds of paths is responsible for producing a firefly. To ease the debugging of fireflies, a firefly detector is provided which automatically selects paths with extreme contributions on pixel selection. Paths whose contribution differs from the mean by more than two standard deviations are classified as outliers. As a more sophisticated and more robust alternative, we also provide a second outlier detector based on the generalized extreme Studentized deviate (ESD) test for outliers by Rosner.
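The simpler two-standard-deviation rule can be sketched in a few lines. This is an illustration, not EMCA's implementation: it assumes one scalar contribution per path (for example a luminance value) and uses the population standard deviation, neither of which is specified in the text. The generalized ESD test is not shown here.

```python
from statistics import mean, pstdev
from typing import List


def two_sigma_outliers(contributions: List[float], k: float = 2.0) -> List[int]:
    """Return the indices of paths further than k standard deviations from the mean."""
    mu = mean(contributions)
    sigma = pstdev(contributions)  # population standard deviation (assumed)
    if sigma == 0.0:
        return []  # all contributions are identical, so there are no outliers
    return [i for i, c in enumerate(contributions) if abs(c - mu) > k * sigma]


# Made-up sample: nine ordinary paths and one extreme contribution.
samples = [0.2, 0.3, 0.25, 0.1, 0.0, 0.15, 0.3, 0.2, 0.25, 50.0]
outliers = two_sigma_outliers(samples)
```

Note that the extreme sample itself inflates both the mean and the standard deviation, which is one reason a more robust test is useful when several outliers are present.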

Filter

The ability to filter data by specific criteria offers more flexibility when analyzing traced paths and their collected path data. Therefore, we provide a filter algorithm that supports multiple filters with various criteria based on the path data. Users can define one or more filter constraints, which are applied in combination.
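Combining several filter constraints can be sketched as a list of predicates. The sketch assumes that "in combination" means a path must satisfy every active constraint (logical AND); the record layout and the helper names `in_range` and `apply_filters` are hypothetical.

```python
from typing import Callable, Dict, List

Path = Dict[str, float]             # hypothetical per-path record
Predicate = Callable[[Path], bool]  # one filter constraint


def in_range(key: str, low: float, high: float) -> Predicate:
    """Build a constraint that keeps paths with low <= path[key] <= high."""
    return lambda path: low <= path[key] <= high


def apply_filters(paths: List[Path], filters: List[Predicate]) -> List[Path]:
    """Keep only the paths that satisfy every constraint (AND is assumed)."""
    return [p for p in paths if all(f(p) for f in filters)]


# Made-up path records.
paths = [
    {"depth": 2, "luminance": 0.3},
    {"depth": 7, "luminance": 12.0},
    {"depth": 9, "luminance": 0.1},
]

# Keep deep paths that also carry a large contribution.
deep_and_bright = apply_filters(
    paths,
    [in_range("depth", 5, 10), in_range("luminance", 1.0, float("inf"))],
)
```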

Video Demo

Link to live demonstration: https://vimeo.com/397632936

License

The software is released under the MIT license (see LICENSE). Be aware of the licenses of the third-party software it uses:

- PySide2 (LGPL)

- vtk (BSD3)

- matplotlib (BSD)

- numpy (BSD)

- six (MIT)

- scipy (BSD)

- OpenEXR (BSD)

- Pillow (HPND)

- Imath (MIT)

More information about the installation setup can be found on GitHub (see the links below).

If you have any questions, feel free to contact me.

Cheers,

Christoph Kreisl

CodevemberTeam 2020

All links collected:

Master Thesis 2019: https://github.com/ckreisl/emca/blob/readme/images/ckreisl_thesis.pdf

GitHub Source Code: https://github.com/ckreisl/emca

EMCA Demo Video: https://vimeo.com/397632936